Now that the Audiophile’s Analyzer is complete, how can all of its features be provided to the Musician’s Workbench?

Simply backporting the code would not be the best solution, as it would result in massive code duplication. Even more problematic would be the clash of design philosophies.

The Musician’s Workbench was designed to reproduce the functionality of the original SA-10 hardware. It is lean and real-time by design.

The Audiophile’s Analyzer was designed to provide every known music transcription technique in a single integrated package. It is large and not real-time.

The solution is to allow the Audiophile’s Analyzer to import session files from the Musician’s Workbench.

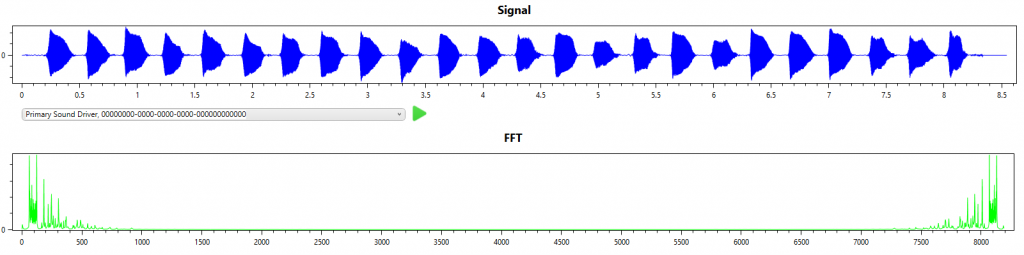

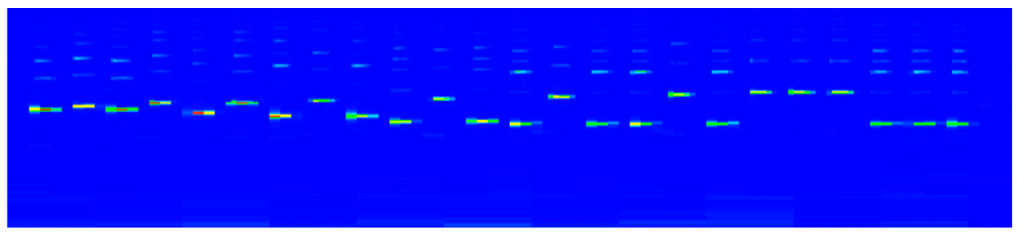

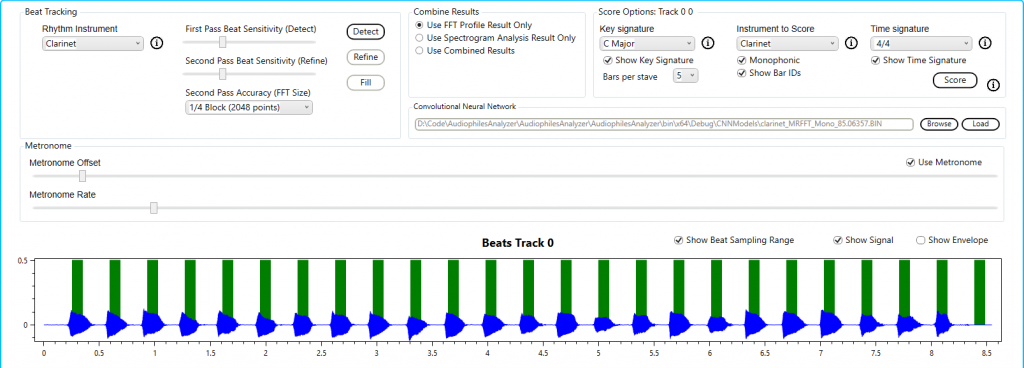

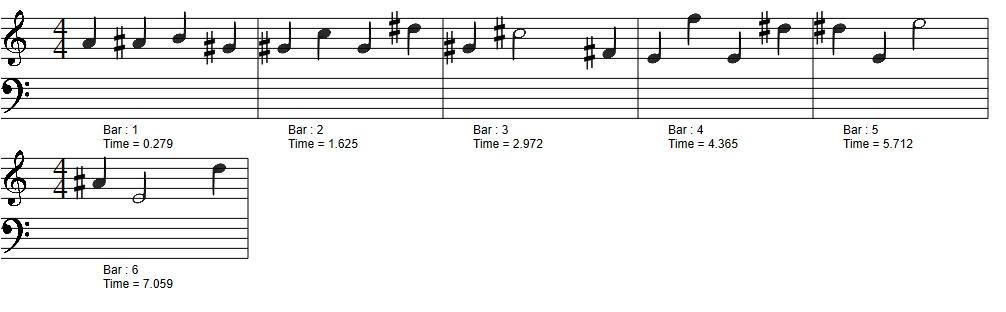

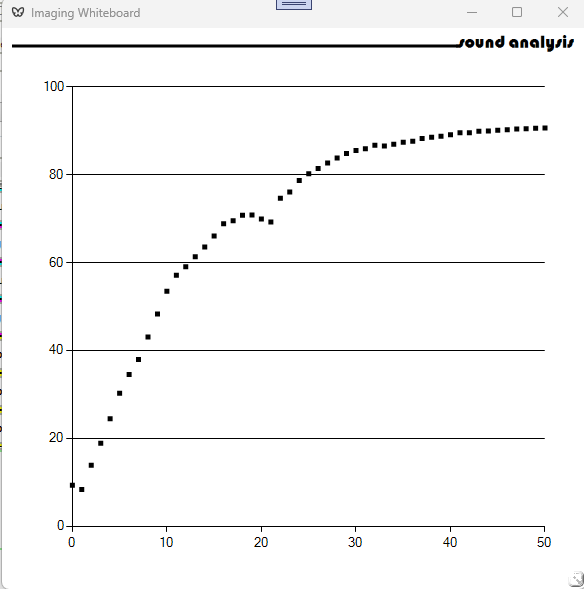

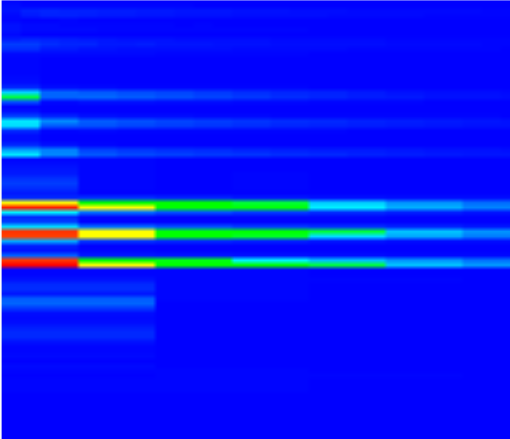

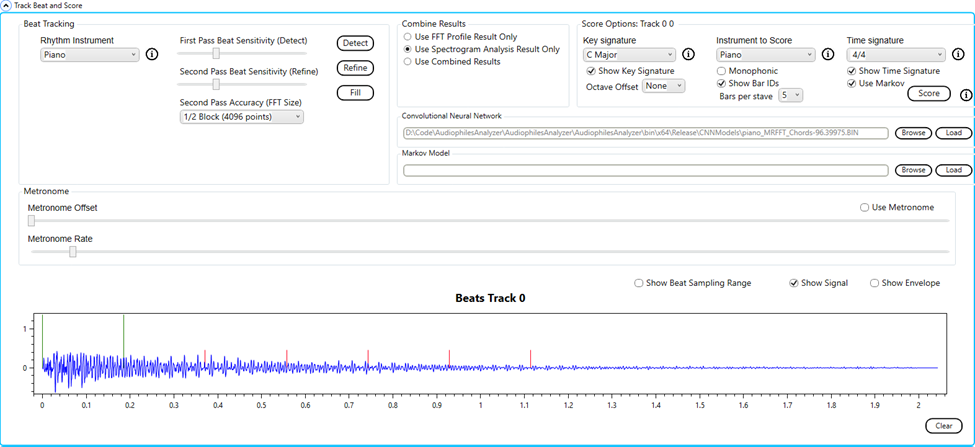

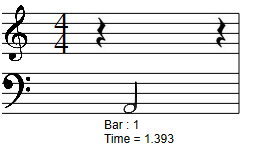

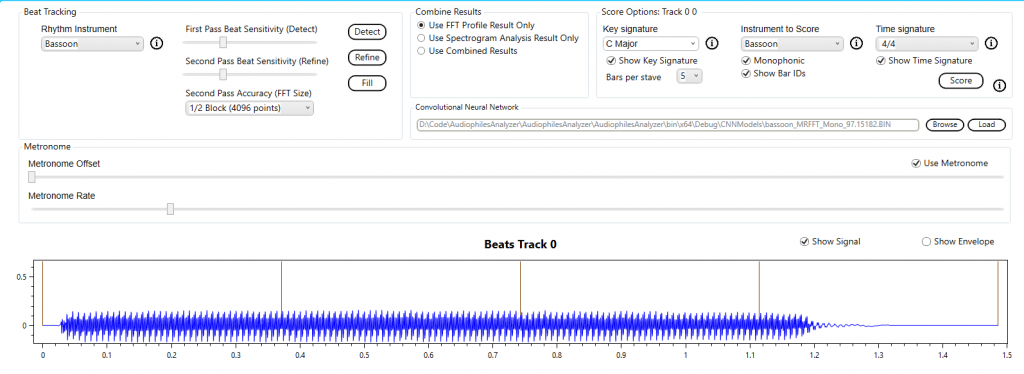

Here we see the Beats graph from the Analysis tab of the Audiophile’s Analyzer. The beats come from the session file and were originally generated by the metronome of the Musician’s Workbench. The overlaid audio signal is the original audio sampled by the Musician’s Workbench. The audio was sampled only on the beat, only when there was sound, and only for long enough to allow a single FFT to be performed.

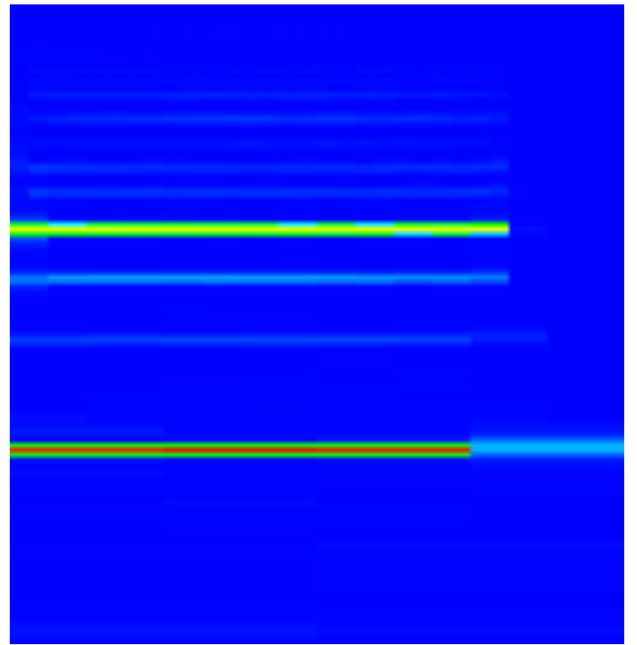

The spectrogram for the same audio is shown.

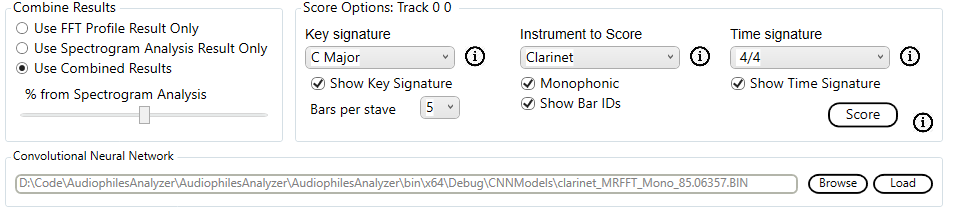

Now the user can re-transcribe the session using any of the techniques available in the Audiophile’s Analyzer, including the built-in CNNs. This would simply not be possible in real time, as it requires 7 FFTs to be performed before the spectrogram can be sliced and sent to the CNN.

The Audiophile’s Analyzer can also provide some insight into the internals of the Musician’s Workbench which are not normally displayed, answering questions such as “Should I have used Delayed Sampling?” and “Did I select the best Octave Range?”

The problem with training neural networks is always finding the training data. Samples of individual notes are available on the internet. Samples of chords are not available.

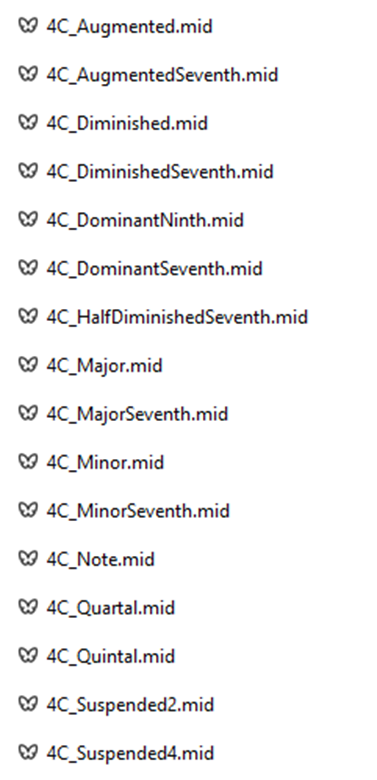

There are 16 chord types that are recognized by the Audiophile’s Analyzer. There are 96 notes in 8 octaves. So, it is possible to play 16 × 96 = 1536 different chords on a piano keyboard. It is not practical to play, record and label so many samples.
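The count above can be checked with a short sketch. It assumes the octave-first note labels used elsewhere in this article (0C to 7B) and sharp-based note names; the chord types are represented only by an index, since the individual types are not listed in the text.

```python
from itertools import product

# Twelve pitch classes per octave; sharp spellings are an assumption.
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]
OCTAVES = range(8)        # octaves 0..7, giving labels 0C..7B
CHORD_TYPE_COUNT = 16     # from the text; the types themselves are not named here

# Every possible root note, labelled octave-first (e.g. "2A").
roots = [f"{octave}{name}" for octave, name in product(OCTAVES, NOTE_NAMES)]

# Every (root, chord type) combination.
chords = [(root, chord_type)
          for root in roots
          for chord_type in range(CHORD_TYPE_COUNT)]

print(len(roots))    # 96
print(len(chords))   # 1536
```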

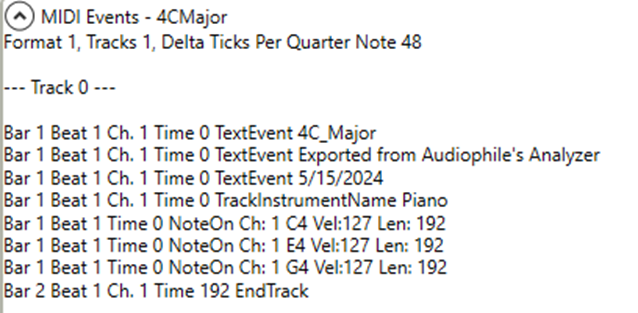

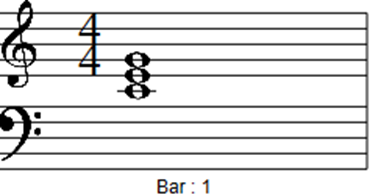

To solve this, a feature has been added to the CNN tab of the Audiophile’s Analyzer which will produce MIDI files for every possible chord for a selected instrument.

These MIDI files are then converted to .wav audio files using a third-party application. When the Audiophile’s Analyzer reads these files, it produces a spectrogram.
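To give an idea of what such a generated file contains, here is a minimal sketch that writes one chord as a single-track Standard MIDI File using only the Python standard library. This is not the code used by the Audiophile’s Analyzer; the function names, note duration, velocity and output filename are all hypothetical choices made for illustration.

```python
import struct

def vlq(value):
    """Encode a non-negative integer as a MIDI variable-length quantity."""
    out = [value & 0x7F]
    value >>= 7
    while value:
        out.append((value & 0x7F) | 0x80)
        value >>= 7
    return bytes(reversed(out))

def chord_midi_bytes(notes, program=0, ticks=1920, division=480):
    """Return the bytes of a format-0 MIDI file that plays `notes` together.

    notes:    MIDI note numbers (0..127) sounded at the same time
    program:  General MIDI program number, 0-based (0 = acoustic grand piano)
    ticks:    how long the chord is held, in MIDI ticks (assumed value)
    division: ticks per quarter note (assumed value)
    """
    events = bytes([0x00, 0xC0, program])            # select the instrument
    for note in notes:
        events += bytes([0x00, 0x90, note, 100])     # note on, velocity 100
    for i, note in enumerate(notes):
        delta = ticks if i == 0 else 0               # wait once, then release all
        events += vlq(delta) + bytes([0x80, note, 0])
    events += bytes([0x00, 0xFF, 0x2F, 0x00])        # end-of-track meta event
    header = b"MThd" + struct.pack(">IHHH", 6, 0, 1, division)
    return header + b"MTrk" + struct.pack(">I", len(events)) + events

# An A major triad as MIDI note numbers (A, C sharp, E).
data = chord_midi_bytes([57, 61, 64])
with open("example_chord.mid", "wb") as f:           # hypothetical filename
    f.write(data)
```

Looping such a function over every root note and chord type would yield one file per chord, which is the kind of batch a MIDI-to-.wav converter can then process.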

The Training set creation utility is then used to slice the spectrogram to create the training images.

Monochrome images are used to train the CNN; color is for humans.

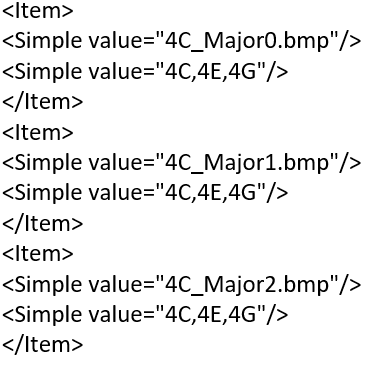

This utility will recognize the filename format and produce the labels file. Multiple labels will be applied to each file.

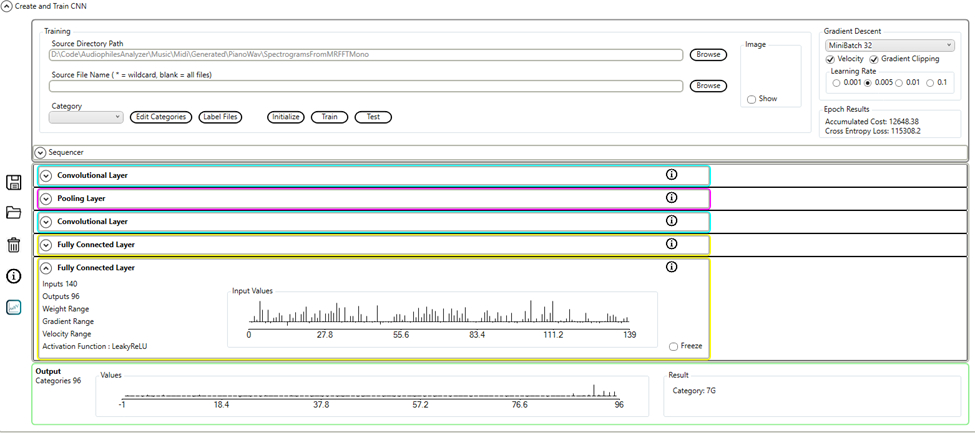

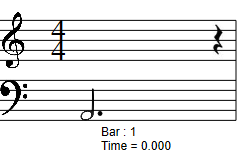

The Audiophile’s Analyzer is used to build and train a CNN using the labeled images.

The output layer of the neural network will have 96 outputs, labeled from 0C to 7B. During training the required outputs will be set for each note in the file label.
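Setting the required outputs amounts to building a multi-hot target vector: one slot per note label, with a 1 for every note present in the chord. The sketch below illustrates the idea; the sharp-based note spellings, the octave boundary at C and the helper name `target_vector` are assumptions made for the example, not details taken from the Audiophile’s Analyzer.

```python
# Twelve pitch classes per octave; sharp spellings are an assumption.
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

# 96 output labels in octave-first form: "0C", "0C#", ..., "7B".
LABELS = [f"{octave}{name}" for octave in range(8) for name in NOTE_NAMES]
INDEX = {label: i for i, label in enumerate(LABELS)}

def target_vector(notes):
    """Return a 96-element list with 1.0 at each labelled note, 0.0 elsewhere."""
    target = [0.0] * len(LABELS)
    for note in notes:
        target[INDEX[note]] = 1.0
    return target

# An A major triad rooted at A in octave 2: A, C sharp, E.
target = target_vector(["2A", "3C#", "3E"])
print(len(target))                                   # 96
print([i for i, v in enumerate(target) if v == 1.0]) # [33, 37, 40]
```

Because several outputs can be active at once, this is a multi-label problem rather than a single-class one, which is why each training file carries multiple labels.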

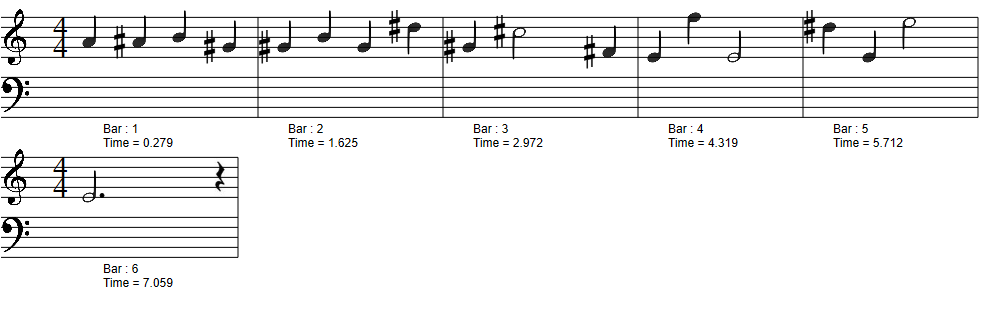

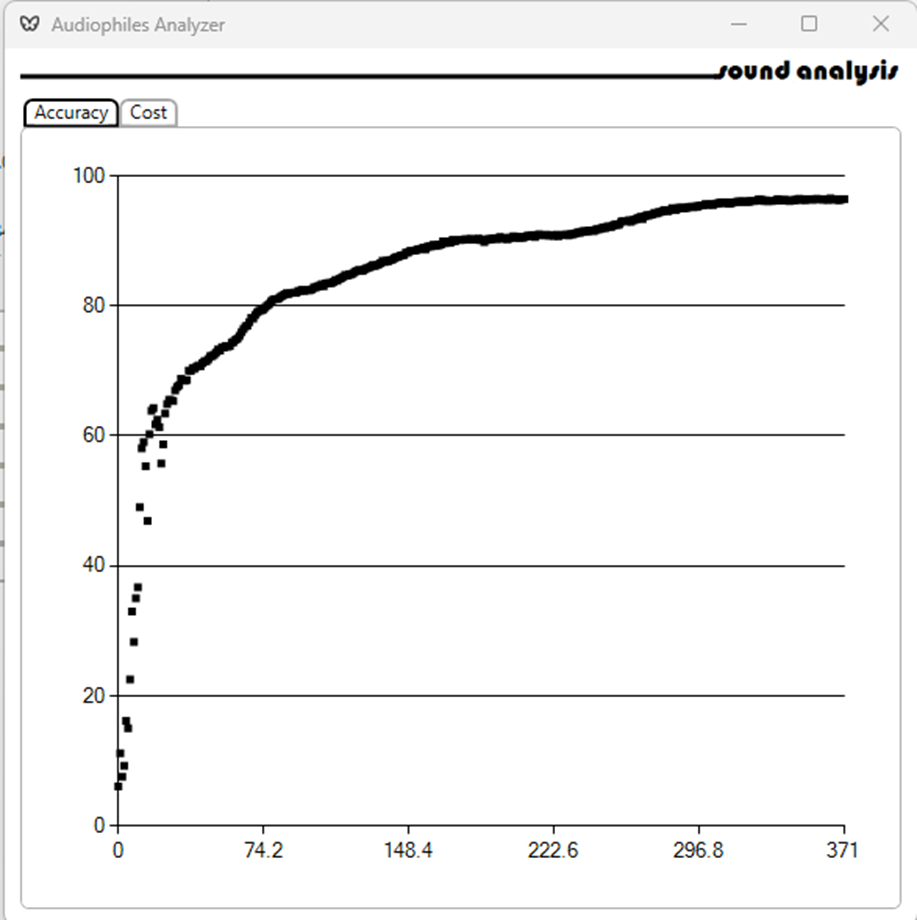

Training results are recorded:

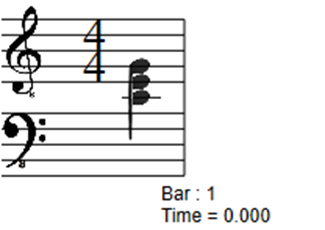

Using the Audiophile’s Analyzer to transcribe a .wav file:

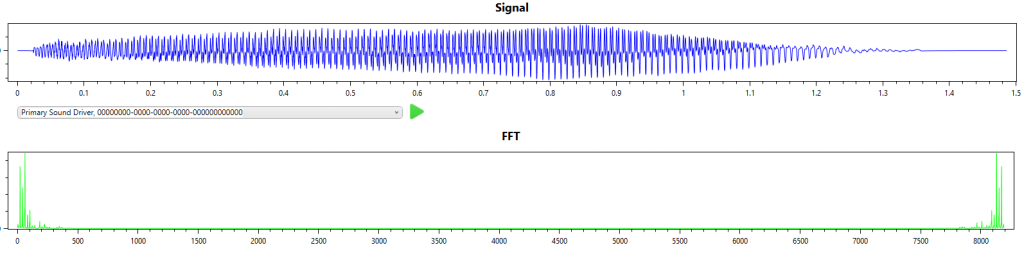

Here I perform the same task, i.e. transcribing a single note (A2), this time from a cello. This is a little more difficult as the cello is a polyphonic instrument with very strong harmonics.

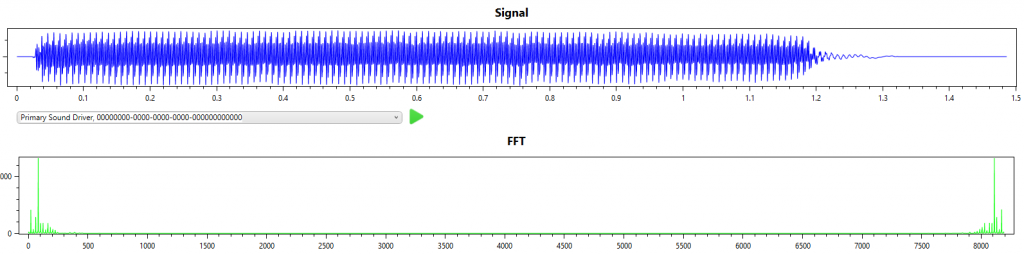

From the signal and FFT result we can see that the first overtone has more energy than the fundamental frequency.

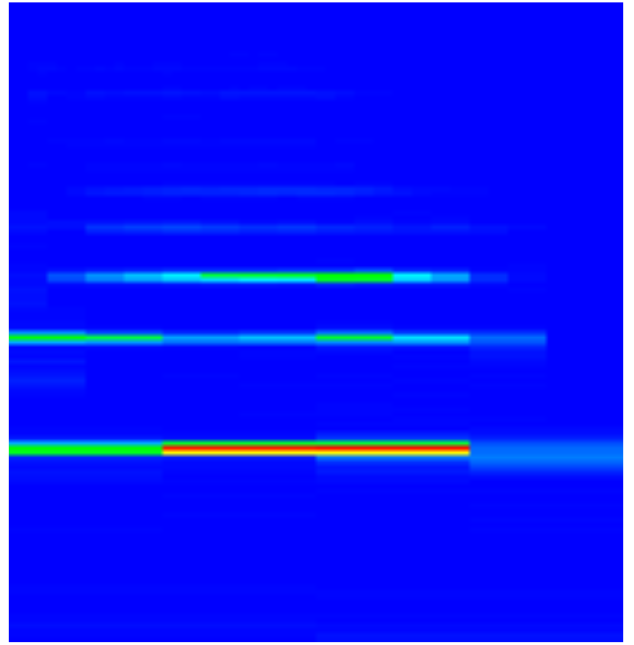

This problem is attenuated in the Multi-rate FFT spectrogram, as the overtone is sampled over a shorter time window by the higher-frequency FFT.

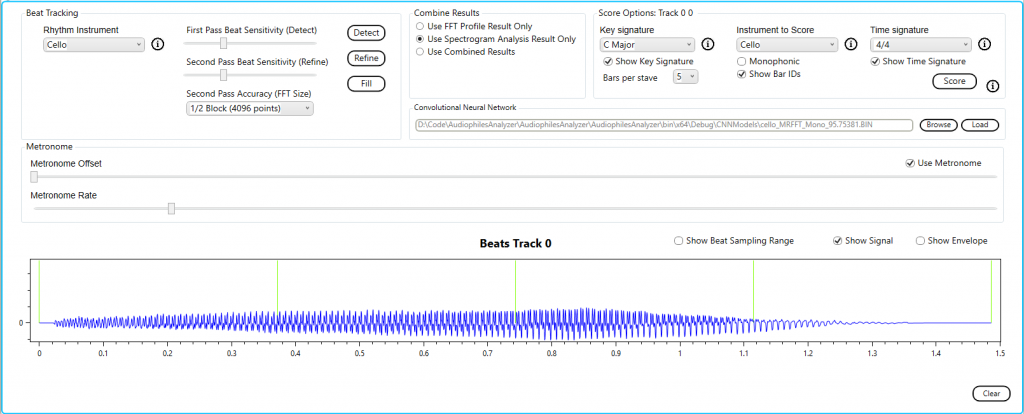

We use the built-in profile for the cello.

Here we can see that, as the note fades on the last beat, the overtone is also transcribed.

Since we know that this was a single note, the monophonic check box should be checked.

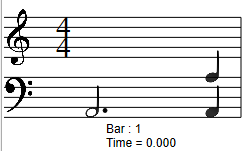

Now the result is as expected.

Using the built-in Convolutional Neural Network for the cello, we see leading and trailing silence; only the red line from the spectrogram has been transcribed.

The threshold for silence is calculated differently for the algorithm and the CNN. The algorithm sets the threshold on the fly during transcription based on a running average of the energy in the audio. For the CNN the threshold for silence is implemented when the spectrogram slices are prepared for training; the CNN was never trained on the parts of the note which were too quiet.
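The on-the-fly threshold can be pictured with the following sketch. It is only an illustration of the running-average idea: the exponential smoothing, the `ratio` and `alpha` values, and the function name are assumptions, not the actual calculation used by the Audiophile’s Analyzer.

```python
def silence_flags(energies, ratio=0.25, alpha=0.2):
    """Flag frames whose energy falls below a fraction of the running average.

    energies: per-frame (or per-beat) signal energy values
    ratio:    fraction of the running average used as the threshold (assumed)
    alpha:    smoothing factor of the exponential running average (assumed)
    """
    flags = []
    running = None
    for energy in energies:
        if running is None:
            running = energy                  # seed the average with the first frame
        threshold = ratio * running           # threshold follows the recent level
        flags.append(energy < threshold)      # True means "treat as silence"
        running = (1 - alpha) * running + alpha * energy
    return flags

print(silence_flags([1.0, 1.0, 1.0, 0.1, 1.0]))
# [False, False, False, True, False]
```

Because the threshold tracks the recent signal level, a quiet recording and a loud recording are both handled without a fixed absolute cut-off. A threshold applied once while preparing training slices, as with the CNN, has no such adaptation at transcription time.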

The simplest music file to transcribe is a single note. Here I use a bassoon playing a single note A (octave 2) to walk through the simplest transcription.

From the signal and FFT result we can see that this is indeed a single note with a single dominant frequency.

The spectrogram confirms the simplicity of this example.

To transcribe this note, we will use the built-in bassoon profile and the default options (i.e. correlation).

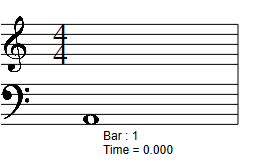

The result is as expected.

Alternatively, we could have elected to use the built-in Convolutional Neural Network for the bassoon.

The result is a little different. The note ends just after the fourth beat. The CNN transcribes this as a 3-beat note, not 4 beats.

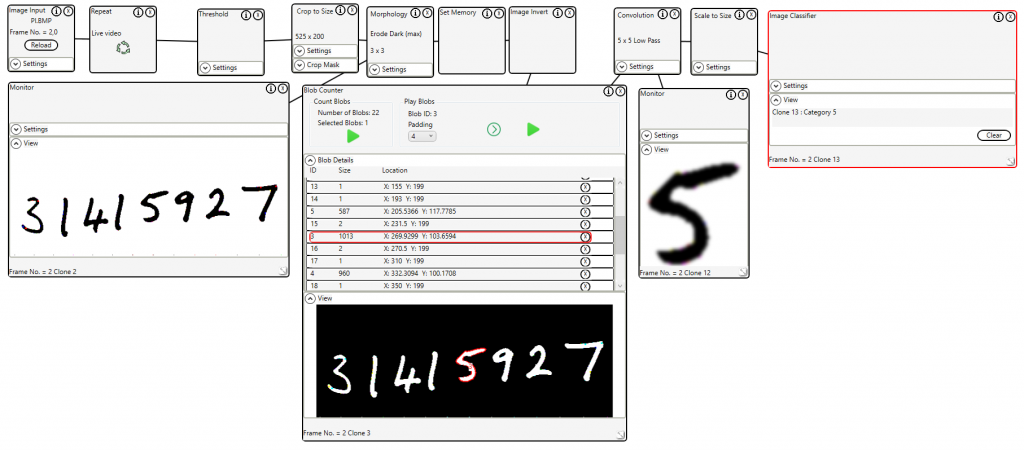

Using the MNIST database of handwritten digits (see the Wikipedia article “MNIST database”), a convolutional neural network was trained to an accuracy of 90%. This took 50 epochs.

The trained model was loaded into the Image Classifier control and used to identify handwritten digits.

The files required to reproduce this demo are available here: https://drive.google.com/file/d/1XKSYvJfAW1maNsaiV0iaWZXor0Tbtuat/view?usp=share_link