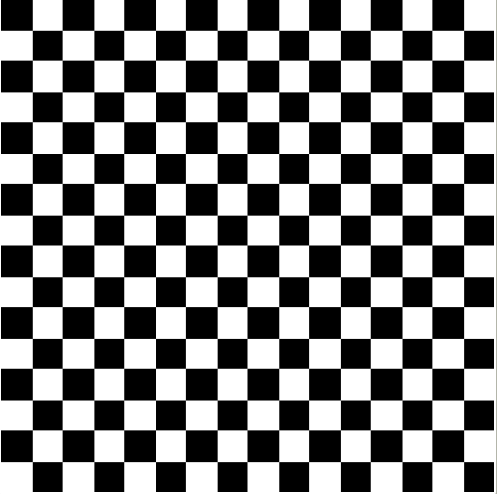

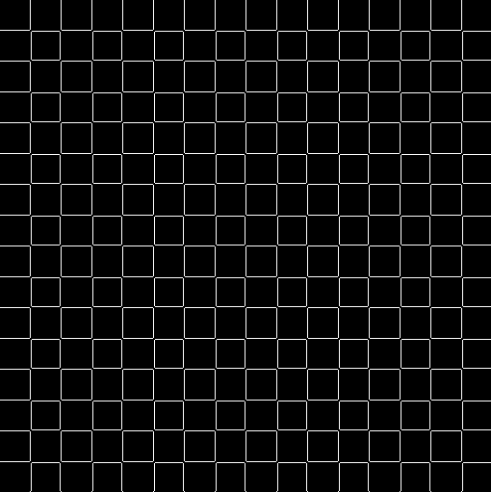

If you take a three-dimensional object and rotate it 180 degrees about each of the three perpendicular axes (x, y and z) in turn, the object returns to its original position.

I don’t know of an elegant proof of this using complex arithmetic; it would require 3D complex numbers, which do not exist (see the previous blog post ‘Using complex arithmetic to perform combination warps’). There is, however, an abundance of empirical evidence.
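One way to check the claim without complex numbers is with ordinary 3×3 rotation matrices, assuming the three rotations are taken about the x, y and z axes through the object's centre. A 180° rotation about a coordinate axis leaves that axis alone and negates the other two, so each rotation is a diagonal matrix, and their product is the identity:

$$
R_x(\pi)=\begin{pmatrix}1&0&0\\0&-1&0\\0&0&-1\end{pmatrix},\qquad
R_y(\pi)=\begin{pmatrix}-1&0&0\\0&1&0\\0&0&-1\end{pmatrix},\qquad
R_z(\pi)=\begin{pmatrix}-1&0&0\\0&-1&0\\0&0&1\end{pmatrix}
$$

$$
R_z(\pi)\,R_y(\pi)\,R_x(\pi)=\operatorname{diag}\big((-1)(-1)(1),\ (-1)(1)(-1),\ (1)(-1)(-1)\big)=\operatorname{diag}(1,1,1)=I
$$

Because diagonal matrices commute, the order in which the three rotations are applied does not matter.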

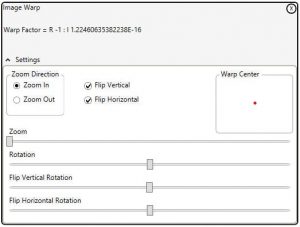

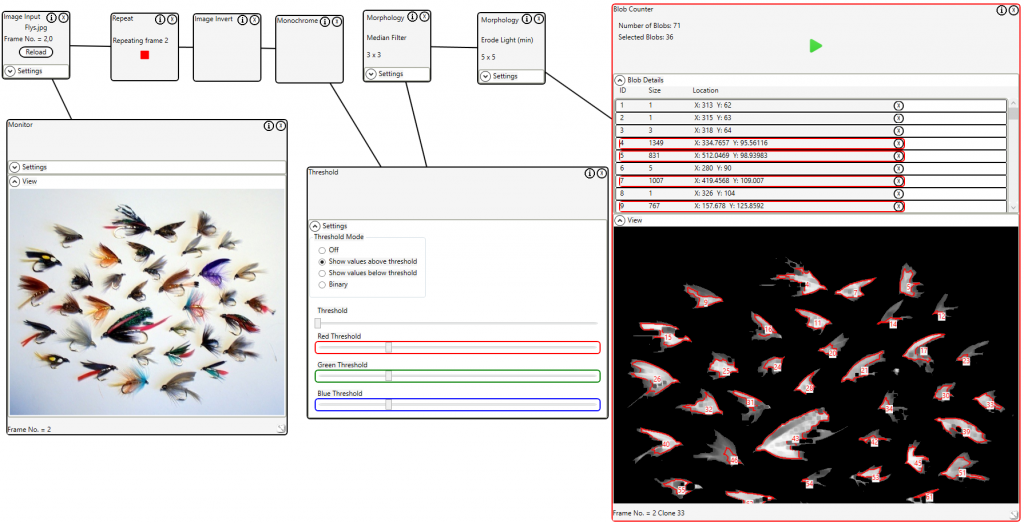

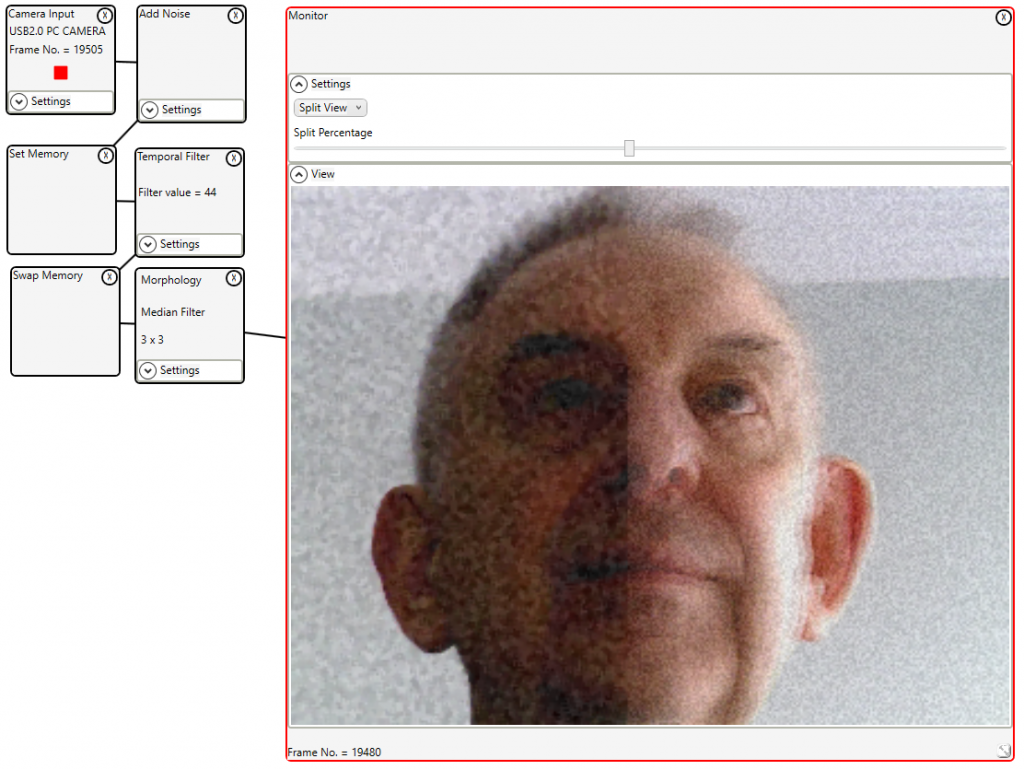

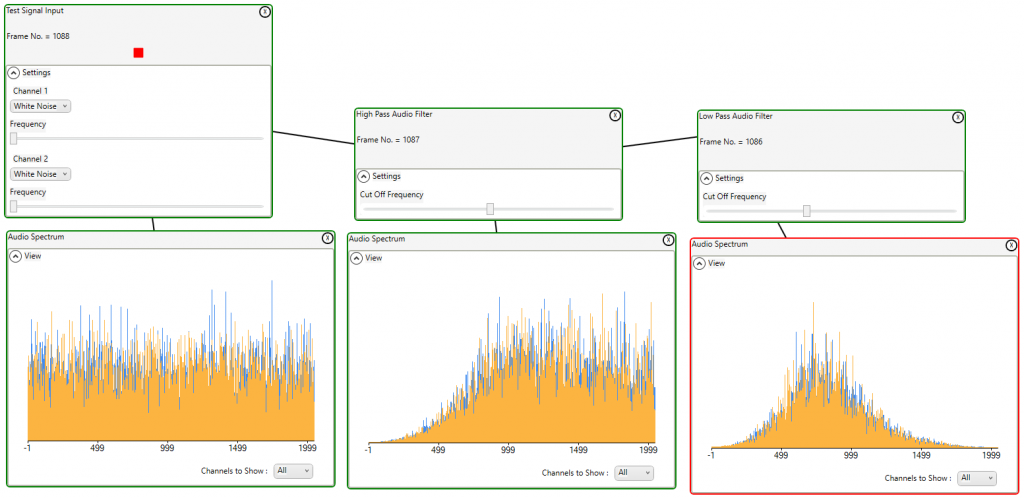

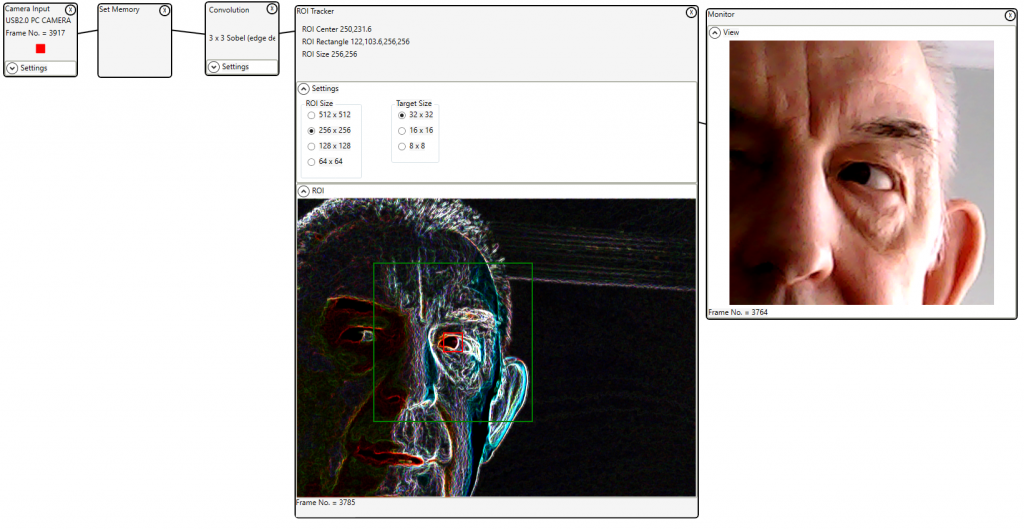

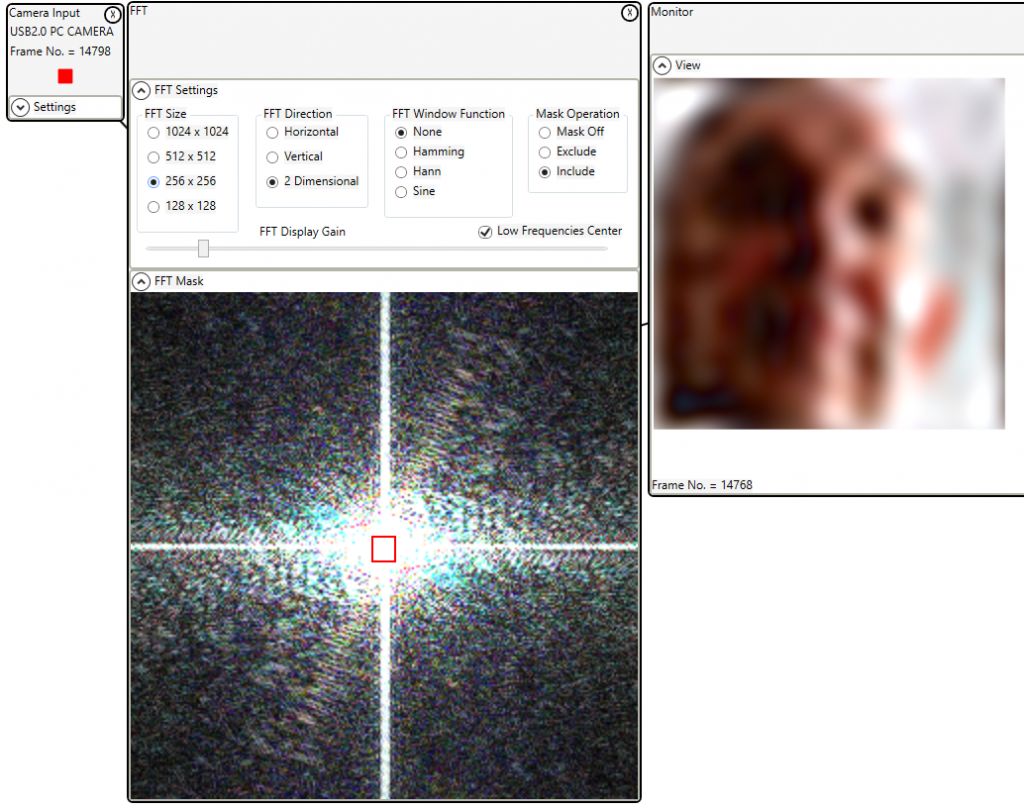

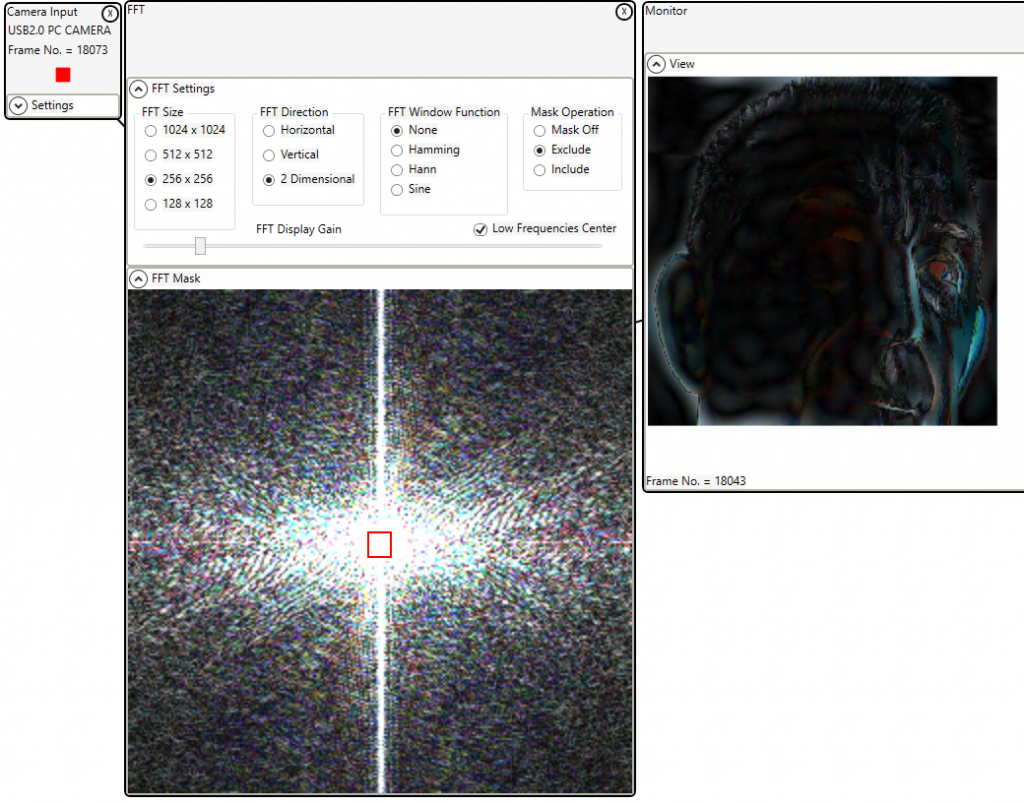

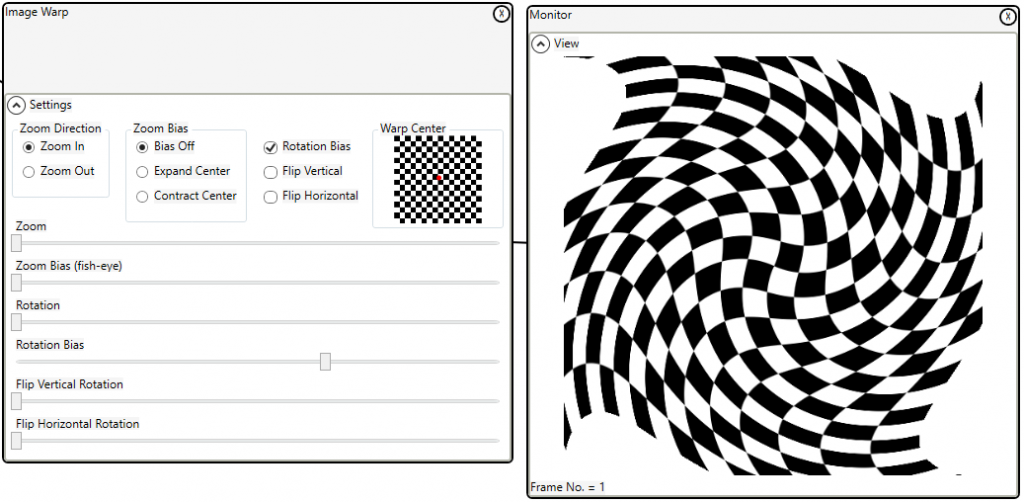

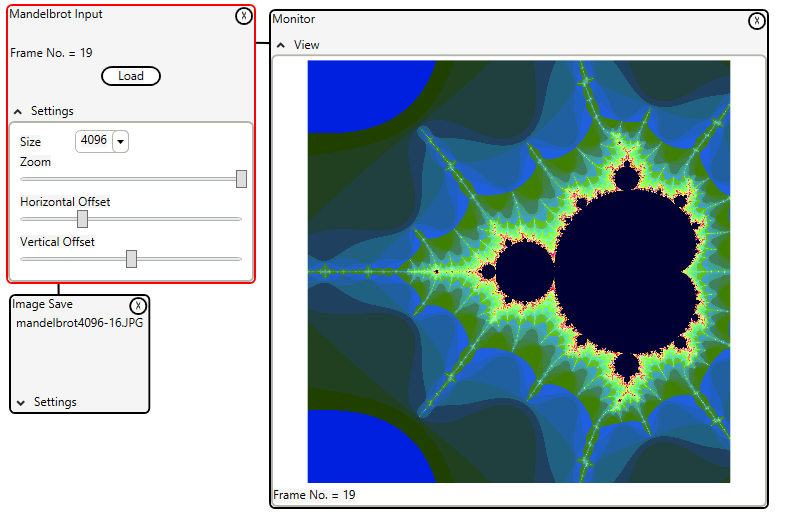

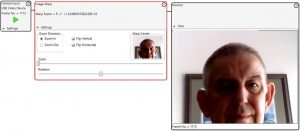

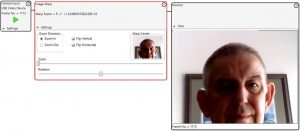

Using the warp control of the Imaging Whiteboard, we can perform this 3D transformation.

Apply Flip Vertical, then Flip Horizontal, then Rotate 180 degrees: the image returns to its original, unwarped position.
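The same cancellation can be sketched with plain complex arithmetic. This is a minimal sketch, not the Imaging Whiteboard's code: it assumes each pixel position is a complex number z = x + iy measured from the image centre, and the function names are hypothetical. Flip Vertical negates y (the complex conjugate), Flip Horizontal negates x (minus the conjugate), and Rotate 180 degrees multiplies by e^{iπ} = −1.

```python
# Hypothetical helpers: pixel positions are complex numbers z = x + iy,
# measured from the image centre (an assumption, not the Whiteboard's API).


def flip_vertical(z: complex) -> complex:
    """Mirror top-to-bottom: y -> -y, i.e. the complex conjugate."""
    return z.conjugate()


def flip_horizontal(z: complex) -> complex:
    """Mirror left-to-right: x -> -x, i.e. minus the conjugate."""
    return -z.conjugate()


def rotate_180(z: complex) -> complex:
    """Half-turn about the centre: multiply by e^(i*pi) = -1."""
    return -z


def all_three(z: complex) -> complex:
    """Flip Vertical, then Flip Horizontal, then Rotate 180 degrees."""
    return rotate_180(flip_horizontal(flip_vertical(z)))


if __name__ == "__main__":
    for z in [3 + 2j, -1.5 + 0.25j]:
        print(z, "->", all_three(z))
```

Seen in 3D, each flip is a 180° rotation about an in-plane axis (horizontal or vertical) viewed head-on, and Rotate 180 degrees is the rotation about the axis pointing out of the screen, which is why the three cancel just as in the 3D case.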

So if I am using regular 2D complex numbers to perform the warp, how do I perform 3D warping?

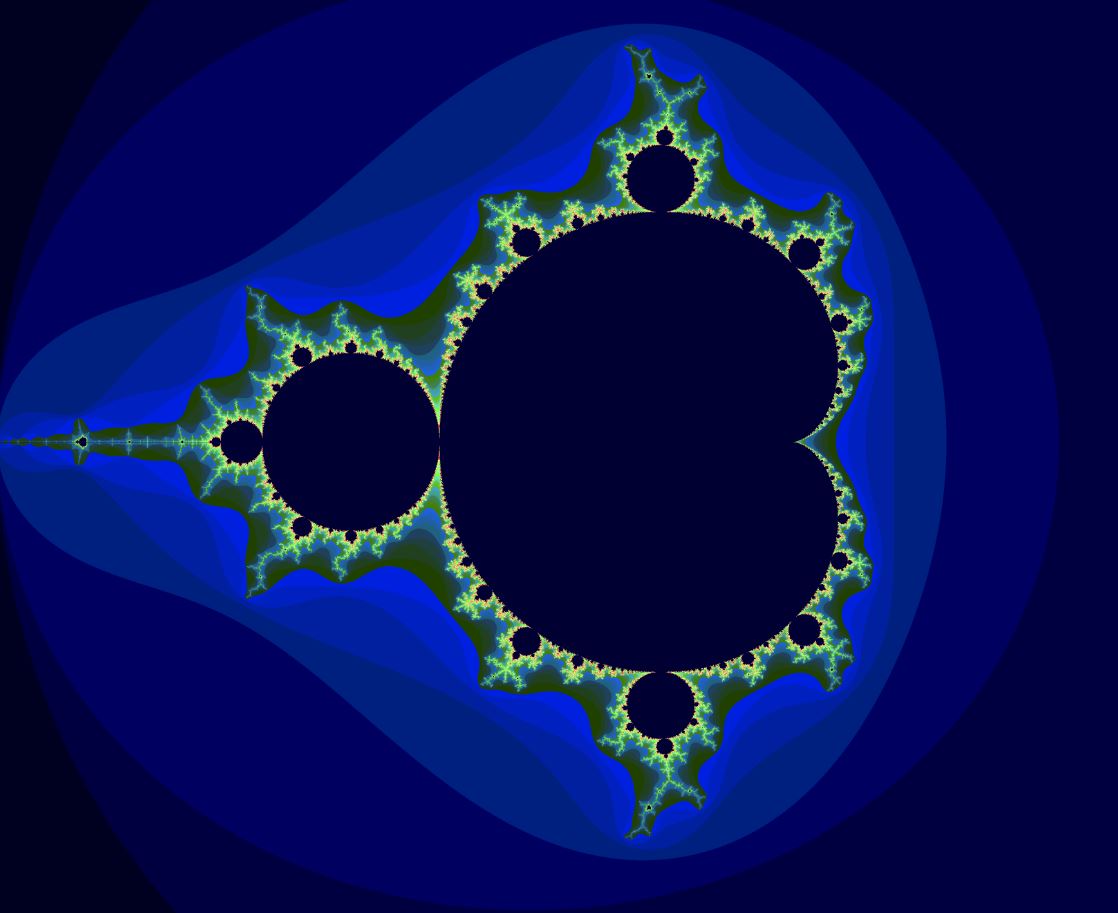

To explain this better, I have created a proof of concept that implements full horizontal and vertical rotation. It has not been published (I’m not sure that it is useful).

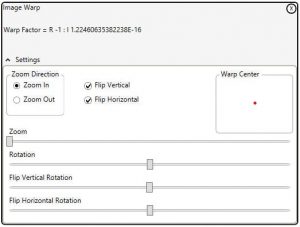

After applying the warp factor:

```csharp
sourceAddress = targetAddress * WarpFactor;
```

The result is modified:

```csharp
sourceAddress.Real = sourceAddress.Real / Math.Cos(fhRadians); // Horizontal rotation
sourceAddress.Imag = sourceAddress.Imag / Math.Cos(fvRadians); // Vertical rotation
```
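A rough re-creation of these steps, for experimenting outside the Whiteboard, might look like this. It is a sketch under assumptions: the function name `source_address` and the use of degrees are hypothetical, coordinates are measured from the image centre, and the tilt is modelled as a plane viewed straight on without perspective, so a width x on the tilted plane appears as x·cos(fh). Dividing by the cosine maps a target pixel back to the source pixel.

```python
import math


def source_address(target: complex, warp_factor: complex,
                   fh_degrees: float, fv_degrees: float) -> complex:
    """Map a target pixel back to the source pixel it should sample.

    Hypothetical re-creation of the proof-of-concept steps. Coordinates are
    assumed to be complex numbers measured from the image centre, and the
    tilt is modelled without perspective.
    """
    fh = math.radians(fh_degrees)  # horizontal rotation (about the vertical axis)
    fv = math.radians(fv_degrees)  # vertical rotation (about the horizontal axis)

    # Step 1: the ordinary complex warp (in-plane rotation and zoom).
    src = target * warp_factor

    # Step 2: undo the foreshortening. A plane tilted by fh shows its x
    # coordinates shrunk by cos(fh), and likewise y by cos(fv), so divide.
    # Near 90 degrees the cosine approaches 0 and the address grows without
    # bound (the plane is seen edge-on); past 90 degrees it changes sign,
    # mirroring the image.
    return complex(src.real / math.cos(fh), src.imag / math.cos(fv))


if __name__ == "__main__":
    p = 3 + 2j
    print(source_address(p, 1 + 0j, 0, 0))      # no tilt
    print(source_address(p, 1 + 0j, 180, 0))    # same as Flip Horizontal
    print(source_address(p, 1 + 0j, 180, 180))  # same as Rotate 180 degrees
```

At 180° the cosine is −1, so the horizontal and vertical rotations reduce to Flip Horizontal and Flip Vertical, and with both at 180° the result matches Rotate 180 degrees.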

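Manipulating the real and imaginary components separately is not really a correct use of complex numbers: when the two divisors differ, the step cannot be expressed as multiplication by a single complex warp factor.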
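A single product w·z can only rotate and scale uniformly, so scaling the two components by different amounts needs a second term. One way to keep the step within complex arithmetic (a standard identity, not something the Whiteboard is known to use) is to write the axis scaling as a·z + b·z̄ with a = (sx + sy)/2 and b = (sx − sy)/2. With sx = 1/cos(fh) and sy = 1/cos(fv) it reproduces the two divisions above; the function name `scale_axes` is hypothetical.

```python
def scale_axes(z: complex, sx: float, sy: float) -> complex:
    """Scale the real part by sx and the imaginary part by sy.

    Uses only complex operations: a*z + b*conj(z) expands to
    (a + b)*x + i*(a - b)*y, so choosing a = (sx + sy)/2 and
    b = (sx - sy)/2 gives sx*x + i*sy*y. When sx == sy, b is 0 and
    this is an ordinary complex multiplication.
    """
    a = (sx + sy) / 2
    b = (sx - sy) / 2
    return a * z + b * z.conjugate()


if __name__ == "__main__":
    print(scale_axes(3 + 2j, 2, 5))   # x doubled, y multiplied by 5
    print(scale_axes(3 + 2j, -1, 1))  # same as Flip Horizontal
```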